Upon looking at photographs and drawing on their past experiences, humans can often perceive depth in pictures that are, themselves, perfectly flat. However, getting computers to do the same thing has proved quite challenging.

The problem is difficult for several reasons, one being that information is inevitably lost when a scene that takes place in three dimensions is reduced to a two-dimensional (2D) representation. There are some well-established strategies for recovering 3D information from multiple 2D images, but they each have some limitations. A new approach called “virtual correspondence,” which was developed by researchers at MIT and other institutions, can get around some of these shortcomings and succeed in cases where conventional methodology falters.

The standard approach, called “structure from motion,” is modeled on a key aspect of human vision. Because our eyes are separated from each other, they each offer slightly different views of an object. A triangle can be formed whose sides consist of the line segment connecting the two eyes, plus the line segments connecting each eye to a common point on the object in question. Knowing the angles in the triangle and the distance between the eyes, it is possible to determine the distance to that point using elementary geometry, although the human visual system, of course, can make rough judgments about distance without having to go through arduous trigonometric calculations. This same basic idea, of triangulation or parallax views, has been exploited by astronomers for centuries to calculate the distance to faraway stars.
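The elementary geometry behind that triangle can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the function name `distance_from_baseline` and its parameters are hypothetical, the two angles are the interior angles between the baseline and each line of sight, and the result is the perpendicular distance from the baseline to the observed point.

```python
import math

def distance_from_baseline(baseline, angle_left, angle_right):
    """Perpendicular distance from the baseline to the observed point.

    baseline: distance between the two viewpoints (any length unit).
    angle_left, angle_right: interior angles, in radians, between the
    baseline and each line of sight (hypothetical parameter names).
    """
    apex = math.pi - angle_left - angle_right  # angle at the observed point
    if apex <= 0:
        raise ValueError("lines of sight do not converge")
    # The law of sines gives the length of the left line of sight.
    left_side = baseline * math.sin(angle_right) / math.sin(apex)
    # Its component perpendicular to the baseline is the depth.
    return left_side * math.sin(angle_left)

# Two viewpoints 2 units apart, each line of sight at 45 degrees to the baseline.
depth = distance_from_baseline(2.0, math.radians(45), math.radians(45))
print(round(depth, 6))  # 1.0
```

As the two angles approach 90 degrees the apex angle shrinks toward zero and small measurement errors produce large depth errors, which is why a wider baseline helps with distant objects.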

Triangulation is a key element of structure from motion. Suppose you have two pictures of an object (a sculpted figure of a rabbit, for instance), one taken from the left side of the figure and the other from the right. The first step would be to find points or pixels on the rabbit’s surface that both images share. A researcher could go from there to determine the “poses” of the two cameras: the positions where the photos were taken from and the direction each camera was facing. Knowing the distance between the cameras and the way they were oriented, one could then triangulate to work out the distance to a selected point on the rabbit. And if enough common points are identified, it might be possible to obtain a detailed sense of the object’s (or “rabbit’s”) overall shape.
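The final triangulation step can be illustrated with a small sketch. It assumes the camera poses are already known, whereas in structure from motion they would first be estimated from the matched points, and it uses the common midpoint method: each camera contributes a ray toward the shared point, and the estimate is the midpoint of the shortest segment joining the two rays. The function `triangulate_midpoint` and the helper names are hypothetical, and this is not presented as the algorithm used by any particular system.

```python
def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def scale(a, s):
    return tuple(x * s for x in a)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two viewing rays c1 + s*d1 and c2 + t*d2.

    c1, c2: camera centers; d1, d2: ray directions toward the shared point.
    Returns the midpoint of the shortest segment between the two rays.
    """
    w = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; depth is undetermined")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, t))
    return scale(add(p1, p2), 0.5)

# Cameras one unit to the left and right of the origin, both looking at (0, 0, 5).
point = triangulate_midpoint((-1.0, 0.0, 0.0), (1.0, 0.0, 5.0),
                             (1.0, 0.0, 0.0), (-1.0, 0.0, 5.0))
print(point)  # (0.0, 0.0, 5.0)
```

With noisy pixel matches the two rays generally do not meet exactly, which is why the sketch returns a midpoint rather than solving for an exact intersection.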

Considerable progress has been made with this technique, comments Wei-Chiu Ma, a Ph.D. student in MIT’s Department of Electrical Engineering and Computer Science (EECS), “and people are now matching pixels with greater and greater accuracy. So long as we can observe the same point, or points, across different images, we can use existing algorithms to determine the relative positions between cameras.” But the approach only works if the two images have a large overlap. If the input images have very different viewpoints, and hence contain few, if any, points in common, he adds, “the system may fail.”

During summer 2020, Ma came up with a novel way of doing things that could vastly expand the reach of structure from motion. MIT was closed at the time due to the pandemic, and Ma was home in Taiwan, relaxing on the couch. While looking at the palm of his hand and his fingertips in particular, it occurred to him that he could clearly picture his fingernails, even though they were not visible to him.

https://www.youtube.com/watch?v=LSBz9-TibAM

That was the inspiration for the notion of virtual correspondence, which Ma has subsequently pursued with his advisor, Antonio Torralba, an EECS professor and investigator at the Computer Science and Artificial Intelligence Laboratory, along with Anqi Joyce Yang and Raquel Urtasun of the University of Toronto and Shenlong Wang of the University of Illinois. “We want to incorporate human knowledge and reasoning into our existing 3D algorithms,” Ma says, the same reasoning that enabled him to look at his fingertips and conjure up fingernails on the other side, the side he could not see.

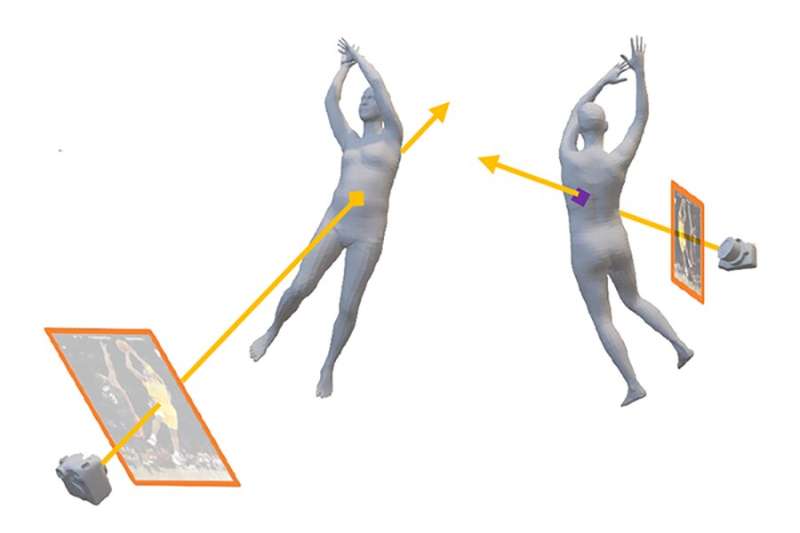

Structure from motion works when two images have points in common, because that means a triangle can always be drawn connecting the cameras to the common point, and depth information can thereby be gleaned from that. Virtual correspondence offers a way to carry things further. Suppose, once again, that one photo is taken from the left side of a rabbit and another photo is taken from the right side. The first photo might reveal a spot on the rabbit’s left leg. But since light travels in a straight line, one could use general knowledge of the rabbit’s anatomy to know where a light ray going from the camera to the leg would emerge on the rabbit’s other side. That point may be visible in the other image (taken from the right-hand side) and, if so, it could be used via triangulation to compute distances in the third dimension.
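The idea of following a camera ray through the object to the point where it emerges can be shown with a toy example. The sketch below replaces the rabbit with a sphere, purely as a hypothetical stand-in for a known shape; the researchers rely on shape knowledge encoded by neural networks, not on a sphere. The function `ray_sphere_hits` and its parameters are illustrative names, not part of the published method.

```python
import math

def ray_sphere_hits(origin, direction, center, radius):
    """Return (entry, exit) points where a camera ray crosses a sphere.

    The sphere stands in for an object whose shape is known in advance.
    Returns None if the ray misses the sphere or the sphere is not fully
    in front of the camera.
    """
    norm = math.sqrt(sum(x * x for x in direction))
    d = tuple(x / norm for x in direction)  # unit ray direction
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(x * y for x, y in zip(oc, d))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere
    root = math.sqrt(disc)
    t_in, t_out = -b - root, -b + root
    if t_in < 0:
        return None  # camera is inside the sphere or facing away from it

    def point_at(t):
        return tuple(o + t * x for o, x in zip(origin, d))

    return point_at(t_in), point_at(t_out)

# A camera on the left of a unit sphere, looking along the +x axis.
entry, exit_point = ray_sphere_hits((-5.0, 0.0, 0.0), (1.0, 0.0, 0.0),
                                    (0.0, 0.0, 0.0), 1.0)
print(entry)       # (-1.0, 0.0, 0.0): the surface point the left camera sees
print(exit_point)  # (1.0, 0.0, 0.0): where the same ray emerges on the far side
```

The exit point lies on the side of the object that only a right-hand camera could see, so a pixel in the second image that shows it lies on the same straight line as the first camera's pixel, giving the two views a shared ray to triangulate with.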

Virtual correspondence, in other words, allows one to take a point from the first image on the rabbit’s left flank and connect it with a point on the rabbit’s unseen right flank. “The advantage here is that you don’t need overlapping images to proceed,” Ma notes. “By looking through the object and coming out the other end, this technique provides points in common to work with that weren’t initially available.” And in that way, the constraints imposed on the conventional method can be circumvented.

One might inquire as to how much prior knowledge is needed for this to work, because if you had to know the shape of everything in the image from the outset, no calculations would be required. The trick that Ma and his colleagues employ is to use certain familiar objects in an image, such as the human form, to serve as a kind of “anchor,” and they have devised methods for using our knowledge of the human shape to help pin down the camera poses and, in some cases, infer depth within the image. In addition, Ma explains, “the prior knowledge and common sense that is built into our algorithms is first captured and encoded by neural networks.”

The team’s ultimate goal is far more ambitious, Ma says. “We want to make computers that can understand the three-dimensional world just like humans do.” That objective is still far from realization, he acknowledges. “But to go beyond where we are today, and build a system that acts like humans, we need a more challenging setting. In other words, we need to develop computers that can not only interpret still images but can also understand short video clips and eventually full-length movies.”

A scene in the film “Good Will Hunting” demonstrates what he has in mind. The audience sees Matt Damon and Robin Williams from behind, sitting on a bench that overlooks a pond in Boston’s Public Garden. The next shot, taken from the opposite side, offers frontal (though fully clothed) views of Damon and Williams with an entirely different background. Everyone watching the movie immediately knows they are watching the same two people, even though the two shots have nothing in common. Computers can’t make that conceptual leap yet, but Ma and his colleagues are working hard to make these machines more adept and, at least when it comes to vision, more like us.

The team’s work will be presented next week at the Conference on Computer Vision and Pattern Recognition.

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Citation:

Computer vision technique to enhance 3D understanding of 2D images (2022, June 20)

retrieved 20 June 2022

from https://techxplore.com/news/2022-06-eyesight-approach-3d-2d-photographs.html

